Chapter 6 Data Manipulation

In this chapter we introduce the tidyverse. The tidyverse consists of about a dozen R packages designed to work together, organized around common principles. The central organizing principle is that data should be tidy: Data should be rectangular, each row should correspond to one observation, and each column should corresponds to one observed variable. Data should not be stored in the names of variables.

The tidyverse tools we will use is in this chapter, in order of amount used, are:

- the

dplyrpackage (pronounced “dee - ply - er”). This is the main package that we are learning about in this chapter, - the

stringrpackage for basic string operations, - the

tidyrpackage for thepivotfunctions, - the

lubridatepackage for dealing with times and dates.

To use these tools, you will need to install them. The simplest method is to install the entire tidyverse package, with

Then you can load all the tidyverse tools at once with

Alternately, you can choose to be more selective and install and load each package as you need them. This chapter requires dplyr28:

The advantage to using individual packages is that the tidyverse package is large and has many effects you may not understand.

Using dplyr by itself keeps change to a minimum, and also helps you to learn the correct location of the tools you learn in this chapter.

6.1 Data frames and tibbles

Data in R is usually stored in data frames, with one row per observation and one column per variable.

The tidyverse tools work naturally with data frames, but prefer a new data format called a tibble.

Sometimes tidyverse tools will automatically convert data frames into tibbles. Or, you can make the conversion

yourself using the as_tibble function:

## # A tibble: 32 x 11

## mpg cyl disp hp drat wt qsec vs am gear carb

## <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 21 6 160 110 3.9 2.62 16.5 0 1 4 4

## 2 21 6 160 110 3.9 2.88 17.0 0 1 4 4

## 3 22.8 4 108 93 3.85 2.32 18.6 1 1 4 1

## 4 21.4 6 258 110 3.08 3.22 19.4 1 0 3 1

## 5 18.7 8 360 175 3.15 3.44 17.0 0 0 3 2

## 6 18.1 6 225 105 2.76 3.46 20.2 1 0 3 1

## 7 14.3 8 360 245 3.21 3.57 15.8 0 0 3 4

## 8 24.4 4 147. 62 3.69 3.19 20 1 0 4 2

## 9 22.8 4 141. 95 3.92 3.15 22.9 1 0 4 2

## 10 19.2 6 168. 123 3.92 3.44 18.3 1 0 4 4

## # … with 22 more rowsAs usual, this does not change mtcars into a tibble unless we store the result back into the variable via mtcars <- as_tibble(mtcars). Most of the time, you don’t need to worry about the difference between a data frame and a tibble, but tibbles have

a couple of advantages over data frames:

Printing. By now you have probably noticed that if you print a data frame with thousands of rows, you get thousands of rows of output. This is rarely desirable. Printing a tibble will only show you the first 10 rows of data, and will shorten or remove columns if they do not fit in your output window. Printing a tibble also shows the size of the data and they types of the variables automatically, as you can see in the example above.

Type consistency. If you select two columns of a tibble, you get a tibble. If you select one column of a tibble, you get a tibble. This is type consistency. Data frames do not have this behavior, since selecting two columns gives a data frame but selecting one column gives a vector:

## am gear carb

## Mazda RX4 1 4 4

## Mazda RX4 Wag 1 4 4

## Datsun 710 1 4 1

## Hornet 4 Drive 0 3 1

## Hornet Sportabout 0 3 2## [1] 4 4 1 1 2In this chapter, we will primarily use a data set consisting of user generated numerical ratings of movies. This data comes from the web site MovieLens29 (movielens.org), a non-commercial site for movie recommendations. The data is collected and distributed by GroupLens research of the University of Minnesota and made available for research and educational use.

The MovieLens data contains the variables userId, movieId, rating, timestamp, title and genres. The data set consists of 100,836 observations taken at a random starting point, and includes

the reviews of 610 consecutively numbered users.

In addition to being available on the MovieLens web page, we have made it available in the package fosdata associated with this book. If you haven’t yet done so, you will need to install fosdata as follows.

install.packages("remotes") # if you don't already have this package

remotes::install_github(repo = "speegled/fosdata")We recommend using fosdata::<data_set> to reference data sets individually, rather than loading them all with library(fosdata).

Let’s convert it to a tibble so that when we print things out to the screen, we aren’t overwhelmed with information.

## # A tibble: 100,836 x 6

## userId movieId rating timestamp title genres

## <int> <int> <dbl> <int> <chr> <chr>

## 1 1 1 4 964982703 Toy Story (199… Adventure|Animation|…

## 2 1 3 4 964981247 Grumpier Old M… Comedy|Romance

## 3 1 6 4 964982224 Heat (1995) Action|Crime|Thriller

## 4 1 47 5 964983815 Seven (a.k.a. … Mystery|Thriller

## 5 1 50 5 964982931 Usual Suspects… Crime|Mystery|Thrill…

## 6 1 70 3 964982400 From Dusk Till… Action|Comedy|Horror…

## 7 1 101 5 964980868 Bottle Rocket … Adventure|Comedy|Cri…

## 8 1 110 4 964982176 Braveheart (19… Action|Drama|War

## 9 1 151 5 964984041 Rob Roy (1995) Action|Drama|Romance…

## 10 1 157 5 964984100 Canadian Bacon… Comedy|War

## # … with 100,826 more rows6.2 dplyr verbs

The dplyr package is organized around commands called verbs. Each verb takes a tibble (or data frame) as its first argument, and possibly additional arguments.

The first verb we will meet is filter, which forms a new data frame consisting of rows that satisfy certain filtering conditions:

Here we create a new data frame with all 218 reviews of the 1999 film “Fight Club”:

## # A tibble: 218 x 6

## userId movieId rating timestamp title genres

## <int> <int> <dbl> <int> <chr> <chr>

## 1 1 2959 5 964983282 Fight Club (1… Action|Crime|Drama|T…

## 2 4 2959 2 945078528 Fight Club (1… Action|Crime|Drama|T…

## 3 10 2959 0.5 1455356582 Fight Club (1… Action|Crime|Drama|T…

## 4 15 2959 2.5 1510571747 Fight Club (1… Action|Crime|Drama|T…

## 5 16 2959 3.5 1377476874 Fight Club (1… Action|Crime|Drama|T…

## 6 17 2959 4.5 1305696867 Fight Club (1… Action|Crime|Drama|T…

## 7 18 2959 4.5 1455049351 Fight Club (1… Action|Crime|Drama|T…

## 8 19 2959 5 965703109 Fight Club (1… Action|Crime|Drama|T…

## 9 21 2959 2 1441392954 Fight Club (1… Action|Crime|Drama|T…

## 10 22 2959 3.5 1268726211 Fight Club (1… Action|Crime|Drama|T…

## # … with 208 more rowsObserve the use of == for comparison. In R (and most modern programming languages), there are some fundamental comparison operators:

==equal to!=not equal to>greater than<less than>=greater than or equal to<=less than or equal to

R has others (such as %in% to tell if a value is in a vector), but these six are the most useful.

Here are two more examples of the filter command. Find all user Ratings of 1 or less:

## # A tibble: 4,181 x 6

## userId movieId rating timestamp title genres

## <int> <int> <dbl> <int> <chr> <chr>

## 1 1 3176 1 964983504 Talented Mr. Ripley, … Drama|Myster…

## 2 3 31 0.5 1306463578 Dangerous Minds (1995) Drama

## 3 3 527 0.5 1306464275 Schindler's List (199… Drama|War

## 4 3 647 0.5 1306463619 Courage Under Fire (1… Action|Crime…

## 5 3 688 0.5 1306464228 Operation Dumbo Drop … Action|Adven…

## 6 3 720 0.5 1306463595 Wallace & Gromit: The… Adventure|An…

## 7 3 914 0.5 1306463567 My Fair Lady (1964) Comedy|Drama…

## 8 3 1093 0.5 1306463627 Doors, The (1991) Drama

## 9 3 1124 0.5 1306464216 On Golden Pond (1981) Drama

## 10 3 1263 0.5 1306463569 Deer Hunter, The (197… Drama|War

## # … with 4,171 more rowsAll reviews of 1 or less for Fight Club:

## # A tibble: 3 x 6

## userId movieId rating timestamp title genres

## <int> <int> <dbl> <int> <chr> <chr>

## 1 10 2959 0.5 1455356582 Fight Club (1… Action|Crime|Drama|Th…

## 2 153 2959 0.5 1525548681 Fight Club (1… Action|Crime|Drama|Th…

## 3 308 2959 0.5 1421374757 Fight Club (1… Action|Crime|Drama|Th…It turns out there are only three users in this data set who really disliked Fight Club!

Now that we have some basic idea of how verbs work, let’s look at an overview of some of the ones that we will use in this chapter. We will use the following verbs regularly when working with data:

filter()forms a new data frame consisting of rows that satisfy certain filtering conditions.select()forms a new data frame with selected columns.arrange()forms a new data frame with row(s) arranged in a specified order.top_n()filters the top n rows according to some ranking.summarize()summarizes a data frame into a single row.distinct()collapses identical data to produces a single row for each distinct valuemutate()creates new variables by computation.

Here are examples of how to use dplyr verbs with the MovieLens data.

- Select the columns rating and movieId (in that order):

select(movies, rating, movieId) - Arrange the ratings by the date they were reviewed:

arrange(movies, timestamp) - Arrange the ratings in descending order of rating:

arrange(movies, desc(rating)) - Find the last five reviews (by timestamp) in the data:

top_n(movies, n=5, timestamp) - Filter to find all the half-star ratings:

filter(movies, rating != round(rating)) - Summarize by finding the mean of all ratings in the data set:

summarize(movies, mean(rating)) - Form a data frame consisting of the unique User ID’s.

distinct(movies, userId) - Mutate the timestamp to a human readable date and time in the new variable called

when:

mutate(movies, when = lubridate::as_datetime(timestamp))

Note that this uses a function fromlubridatepackage, part of the tidyverse.

6.3 dplyr pipelines

Verb commands are simple, but are designed to be used together to perform more complicated operations.

The pipe operator is the dplyr method for combining verbs.

Pipe is the three-character symbol %>%, which you can also type using the three key combination

ctrl-shift-m. Pipe works by taking the value produced on its left and feeding it as the first argument of the function on its right.

For example, we can sort ratings of the 1958 movie “Vertigo” by timestamp using arrange and filter together.

## # A tibble: 60 x 6

## userId movieId rating timestamp title genres

## <int> <int> <dbl> <int> <chr> <chr>

## 1 385 903 4 865023813 Vertigo (19… Drama|Mystery|Romance|T…

## 2 171 903 5 866905882 Vertigo (19… Drama|Mystery|Romance|T…

## 3 372 903 3 874414948 Vertigo (19… Drama|Mystery|Romance|T…

## 4 412 903 4 939115095 Vertigo (19… Drama|Mystery|Romance|T…

## 5 597 903 3 940362409 Vertigo (19… Drama|Mystery|Romance|T…

## 6 199 903 3 940379738 Vertigo (19… Drama|Mystery|Romance|T…

## 7 383 903 4 943571272 Vertigo (19… Drama|Mystery|Romance|T…

## 8 554 903 5 944898646 Vertigo (19… Drama|Mystery|Romance|T…

## 9 45 903 4 951756950 Vertigo (19… Drama|Mystery|Romance|T…

## 10 59 903 5 953610229 Vertigo (19… Drama|Mystery|Romance|T…

## # … with 50 more rowsThis has the same effect as the harder to read command:

With pipelines, we imagine the data flowing into the pipe (movies) then passing through the verbs in sequence, first being filtered and then being

arranged. Pipelines also make it natural to break up long commands into multiple lines after each pipe operator, although this is not required.

Find the mean30 rating of Toy Story, which has movieId==1.

## # A tibble: 1 x 1

## `mean(rating)`

## <dbl>

## 1 3.92The filter() command creates a data frame that consists solely of the observations of Toy Story, and summarize() computes the mean rating.

Pipelines can be used with any R function, not just dplyr verbs. One handy trick, especially if your data is not a tibble, is to pipe into head.

Find all movies which some user rated 5.

## # A tibble: 5 x 1

## title

## <chr>

## 1 Seven (a.k.a. Se7en) (1995)

## 2 Usual Suspects, The (1995)

## 3 Bottle Rocket (1996)

## 4 Rob Roy (1995)

## 5 Canadian Bacon (1995)The filter picks out the 5 star movies, select picks only the title variable, distinct removes duplicate titles, and then head to shows only the first five distinct titles.

6.3.1 Group by and summarize

The MovieLens data has one observation for each user review. However, we are often interested in working with the movies themselves.

The tool to do this is the dplyr verb group_by, which groups data to perform tasks by groups. By itself, group_by has little effect - in fact,

the only visual indication that it has done anything is a line at the top of the tibble output noting that there are Groups.

Here is a simple example with the built-in chickwts data set, which gives the weights of chickens eating different types of feed supplement:

## # A tibble: 71 x 2

## # Groups: feed [6]

## weight feed

## <dbl> <fct>

## 1 179 horsebean

## 2 160 horsebean

## 3 136 horsebean

## 4 227 horsebean

## 5 217 horsebean

## 6 168 horsebean

## 7 108 horsebean

## 8 124 horsebean

## 9 143 horsebean

## 10 140 horsebean

## # … with 61 more rowsIn essentially all uses, we follow group_by with another operation on the groups, most commonly summarize:

## `summarise()` ungrouping output (override with `.groups` argument)## # A tibble: 6 x 2

## feed `mean(weight)`

## <fct> <dbl>

## 1 casein 324.

## 2 horsebean 160.

## 3 linseed 219.

## 4 meatmeal 277.

## 5 soybean 246.

## 6 sunflower 329.This produced a new tibble with one row for each group, and created the new variable with the awkward name mean(weight) which records the mean weight for each group. We could give that variable an easier name to type by assigning it as part of the summarize operation: summarize(mw = mean(weight)). Note that dplyr helpfully tells us that the data frame is no longer grouped after we summarize it. We will hide this message in the rest of the chapter.

Here is another example, showing that we can produce multiple new summary variables. It also illustrates the special function n(), which gives the number of rows in each group. The chickwts experiment had different numbers of chickens in each group:

## # A tibble: 6 x 3

## feed mw count

## <fct> <dbl> <int>

## 1 casein 324. 12

## 2 horsebean 160. 10

## 3 linseed 219. 12

## 4 meatmeal 277. 11

## 5 soybean 246. 14

## 6 sunflower 329. 12To remove groups from a tibble, use the ungroup function. See Example 6.6 for a reason this might be needed.

6.4 The power of dplyr

With a small collection of verbs and the pipe operator, you are well equipped to perform complicated data analysis. This section shows some of the techniques you can use to learn answers from data.

Example Create a data frame consisting of the observations associated with movies that have an average rating of 5 stars. That is, each rating of the movie was 5 stars.

In order to do this, we will use the group_by() function to find the mean rating of each movie.

## # A tibble: 9,719 x 2

## title rating

## <chr> <dbl>

## 1 ¡Three Amigos! (1986) 3.13

## 2 ...All the Marbles (1981) 2

## 3 ...And Justice for All (1979) 3.17

## 4 '71 (2014) 4

## 5 'burbs, The (1989) 3.18

## 6 'Hellboy': The Seeds of Creation (2004) 4

## 7 'night Mother (1986) 3

## 8 'Round Midnight (1986) 3.5

## 9 'Salem's Lot (2004) 5

## 10 'Til There Was You (1997) 4

## # … with 9,709 more rowsNow, we could sort those in decreasing order to see ones that have a mean rating of 5.

## # A tibble: 9,719 x 2

## title rating

## <chr> <dbl>

## 1 'Salem's Lot (2004) 5

## 2 12 Angry Men (1997) 5

## 3 12 Chairs (1976) 5

## 4 20 Million Miles to Earth (1957) 5

## 5 61* (2001) 5

## 6 7 Faces of Dr. Lao (1964) 5

## 7 9/11 (2002) 5

## 8 A Detective Story (2003) 5

## 9 A Flintstones Christmas Carol (1994) 5

## 10 A Perfect Day (2015) 5

## # … with 9,709 more rowsNow, we can filter out those whose mean rating is 5. Note, it is not necessary to sort first.

## # A tibble: 296 x 2

## title rating

## <chr> <dbl>

## 1 'Salem's Lot (2004) 5

## 2 12 Angry Men (1997) 5

## 3 12 Chairs (1976) 5

## 4 20 Million Miles to Earth (1957) 5

## 5 61* (2001) 5

## 6 7 Faces of Dr. Lao (1964) 5

## 7 9/11 (2002) 5

## 8 A Detective Story (2003) 5

## 9 A Flintstones Christmas Carol (1994) 5

## 10 A Perfect Day (2015) 5

## # … with 286 more rowsAnd we see that there are 296 movies which have mean rating of 5.

Find the lowest rated movie.

## # A tibble: 9,719 x 2

## title mr

## <chr> <dbl>

## 1 "3 dev adam (Three Giant Men) (1973) " 0.5

## 2 "3 Ninjas Knuckle Up (1995)" 0.5

## 3 "Aloha (2015)" 0.5

## 4 "Alone in the Dark (2005)" 0.5

## 5 "Amer (2009)" 0.5

## 6 "Anaconda: The Offspring (2008)" 0.5

## 7 "Are We There Yet? (2005)" 0.5

## 8 "Arthur Christmas (2011)" 0.5

## 9 "Baby Boy (2001)" 0.5

## 10 "Bad Santa 2 (2016)" 0.5

## # … with 9,709 more rowsYikes! 0.5 out of 5 stars!

Which movie that received 5 stars has the most ratings?

movies %>%

group_by(title) %>%

summarize(mr = mean(rating), numRating = n()) %>%

arrange(desc(mr), desc(numRating))## # A tibble: 9,719 x 3

## title mr numRating

## <chr> <dbl> <int>

## 1 Belle époque (1992) 5 2

## 2 Come and See (Idi i smotri) (1985) 5 2

## 3 Enter the Void (2009) 5 2

## 4 Heidi Fleiss: Hollywood Madam (1995) 5 2

## 5 Jonah Who Will Be 25 in the Year 2000 (Jonas qui aur… 5 2

## 6 Lamerica (1994) 5 2

## 7 Lesson Faust (1994) 5 2

## 8 'Salem's Lot (2004) 5 1

## 9 12 Angry Men (1997) 5 1

## 10 12 Chairs (1976) 5 1

## # … with 9,709 more rowsThere are seven movies that have a perfect mean rating of 5 and have been rated twice. No movie with a perfect mean rating has been rated three or more times.

Out of movies with a lot of ratings, which has the highest rating? Well, we need to decide what a lot of ratings means. Let’s see how many ratings some of the movies had.

## # A tibble: 9,719 x 2

## title count

## <chr> <int>

## 1 Forrest Gump (1994) 329

## 2 Shawshank Redemption, The (1994) 317

## 3 Pulp Fiction (1994) 307

## 4 Silence of the Lambs, The (1991) 279

## 5 Matrix, The (1999) 278

## 6 Star Wars: Episode IV - A New Hope (1977) 251

## 7 Jurassic Park (1993) 238

## 8 Braveheart (1995) 237

## 9 Terminator 2: Judgment Day (1991) 224

## 10 Schindler's List (1993) 220

## # … with 9,709 more rowsIf we want a list of the 5 most rated movies, we could do:

## # A tibble: 5 x 2

## title count

## <chr> <int>

## 1 Forrest Gump (1994) 329

## 2 Shawshank Redemption, The (1994) 317

## 3 Pulp Fiction (1994) 307

## 4 Silence of the Lambs, The (1991) 279

## 5 Matrix, The (1999) 278So, it seems like 125 ratings could classify as “a lot”. Let’s see which movie with at least 125 ratings has the highest mean rating.

movies %>%

group_by(title) %>%

summarize(count = n(), meanRating = mean(rating)) %>%

filter(count > 125) %>%

arrange(desc(meanRating))## # A tibble: 77 x 3

## title count meanRating

## <chr> <int> <dbl>

## 1 Shawshank Redemption, The (1994) 317 4.43

## 2 Godfather, The (1972) 192 4.29

## 3 Fight Club (1999) 218 4.27

## 4 Godfather: Part II, The (1974) 129 4.26

## 5 Goodfellas (1990) 126 4.25

## 6 Dark Knight, The (2008) 149 4.24

## 7 Usual Suspects, The (1995) 204 4.24

## 8 Princess Bride, The (1987) 142 4.23

## 9 Star Wars: Episode IV - A New Hope (1977) 251 4.23

## 10 Schindler's List (1993) 220 4.22

## # … with 67 more rowsI have to say, that’s not a bad list of movies. If we want to see all of the movies in this list, we can pipe to print, and specify the number of movies we wish to see. (If you definitely want to see all of the movies, you can pipe to print(n = nrow(.)))

movies %>%

group_by(title) %>%

summarize(count = n(), meanRating = mean(rating)) %>%

filter(count > 125) %>%

arrange(desc(meanRating)) %>%

select(title, meanRating) %>%

print(n = 40)## # A tibble: 77 x 2

## title meanRating

## <chr> <dbl>

## 1 Shawshank Redemption, The (1994) 4.43

## 2 Godfather, The (1972) 4.29

## 3 Fight Club (1999) 4.27

## 4 Godfather: Part II, The (1974) 4.26

## 5 Goodfellas (1990) 4.25

## 6 Dark Knight, The (2008) 4.24

## 7 Usual Suspects, The (1995) 4.24

## 8 Princess Bride, The (1987) 4.23

## 9 Star Wars: Episode IV - A New Hope (1977) 4.23

## 10 Schindler's List (1993) 4.22

## 11 American History X (1998) 4.22

## 12 Star Wars: Episode V - The Empire Strikes Back (1980) 4.22

## 13 Raiders of the Lost Ark (Indiana Jones and the Raiders of… 4.21

## 14 One Flew Over the Cuckoo's Nest (1975) 4.20

## 15 Reservoir Dogs (1992) 4.20

## 16 Pulp Fiction (1994) 4.20

## 17 Matrix, The (1999) 4.19

## 18 Forrest Gump (1994) 4.16

## 19 Monty Python and the Holy Grail (1975) 4.16

## 20 Silence of the Lambs, The (1991) 4.16

## 21 Eternal Sunshine of the Spotless Mind (2004) 4.16

## 22 Saving Private Ryan (1998) 4.15

## 23 Star Wars: Episode VI - Return of the Jedi (1983) 4.14

## 24 Memento (2000) 4.12

## 25 Lord of the Rings: The Return of the King, The (2003) 4.12

## 26 Fargo (1996) 4.12

## 27 Lord of the Rings: The Fellowship of the Ring, The (2001) 4.11

## 28 Good Will Hunting (1997) 4.08

## 29 Inception (2010) 4.07

## 30 American Beauty (1999) 4.06

## 31 Indiana Jones and the Last Crusade (1989) 4.05

## 32 Back to the Future (1985) 4.04

## 33 Braveheart (1995) 4.03

## 34 Lord of the Rings: The Two Towers, The (2002) 4.02

## 35 Léon: The Professional (a.k.a. The Professional) (Léon) (… 4.02

## 36 Fugitive, The (1993) 3.99

## 37 Twelve Monkeys (a.k.a. 12 Monkeys) (1995) 3.98

## 38 Seven (a.k.a. Se7en) (1995) 3.98

## 39 Terminator 2: Judgment Day (1991) 3.97

## 40 Alien (1979) 3.97

## # … with 37 more rowsYou just got your summer movie watching list. You’re welcome.

Use dplyr to determine:

- Which movie was rated the most?

- The 10 worst rated movies that were rated at least 100 times.

- Which genre had the highest rating?

Continue reading to check your work.

- Forrest Gump was rated 329 times, the most of any movie.

- Of movies rated at least 100 times, the list of the worst starts with Waterworld, Batman Forever, and Home Alone.

- There are many genres tied for highest rating, with a perfect 5 rating and only 1 user. Your data science instincts should tell you to restrict to movies rated a lot. Almost any reasonable restriction will show you that “Crime|Drama” movies are the highest rated.

When group_by is followed by summarize, the resulting output has one row per group. It is also reasonable to follow group_by with mutate, which can be used to calculate a value for each row based off of data from the groups.

In this case, you can add ungroup to the pipeline to remove the grouping before using the data further.

Find the “worst opinions” in the MovieLens data set. We are interested in finding the movie that received the most ratings while also receiving exactly one five star rating, and in finding the user who had that bad take.

movies %>%

group_by(title) %>%

mutate(

num5 = sum(rating == 5),

numRating = n()

) %>%

ungroup() %>%

filter(rating == 5, num5 == 1) %>%

select(userId, title, numRating) %>%

arrange(desc(numRating)) %>%

head(n = 5)## # A tibble: 5 x 3

## userId title numRating

## <int> <chr> <int>

## 1 246 Addams Family Values (1993) 84

## 2 313 Charlie's Angels (2000) 72

## 3 587 Lost World: Jurassic Park, The (1997) 67

## 4 43 Coneheads (1993) 63

## 5 45 Signs (2002) 63User number 246 has the worst opinion in the data set, as none of 83 other people thought Addams Family Values was all that good.

6.5 Working with character strings

In this section, we use the stringr package to do some basic string manipulation on the movies data set. As far as R is concerned, strings are char variable types, for example:

## chr "Darrin Speegle"Recall that str returns the structure of the variable, together with the contents if it is not too long.

Even finding out how long a string is can be challenging for newcomers, because length gives the number of strings in the variable my_name:

## [1] 1One might very reasonably be interested in the number of spaces (a proxy for the number of words), the capital letters (a person’s initials),

or in sorting a vector of characters containing names by the last name of the people. All of these tasks (and more) are made easier using the stringr package.

We will use the functions str_length, str_count, str_remove and str_extract, str_detect and str_split.

The function str_length accepts one argument, a string, which can either be a single string or a vector of strings. It returns the length(s) of the string(s). For example,

## [1] 14If we had a vector of two names, then it works like this:

## [1] 14 11Next, we consider the function str_count, which takes two parameters, string and pattern. The first is a string, and the second is a regular expression that you use to indicate the pattern that you are counting. To count the number of spaces, we will pass a single character to the pattern, namely the space character.

## [1] 1 1There is one space in each of the two strings in the variable our_names. Regular expressions are very useful and powerful.

If you end up doing much work with text data, you will not regret learning more about them. For example, if you want to match lower case vowels, you could use

## [1] 5 3Note the use of the grouping symbols [ and ]. If we want to match any lower case letter, we use [a-z], any upper case letter is [A-Z], and any digit is [0-9].

So, to count the number of upper case letters in the strings, we would use

## [1] 2 2Next, we examine str_extract and its sibling function str_extract_all.

Suppose we want to extract the initials of the people in the vector our_names.

Our first thought might be to use str_extract, which takes two parameters, string which contains the string(s) we wish to extract from, and pattern which is the pattern we wish to match, in order to extract.

## [1] "D" "B"Note that this only extracts the first occurrence of the pattern in each string. To get all of them, we use str_extract_all

## [[1]]

## [1] "D" "S"

##

## [[2]]

## [1] "B" "C"Note the format of the output. It is a list of character vectors. Let’s check using str:

## List of 2

## $ : chr [1:2] "D" "S"

## $ : chr [1:2] "B" "C"This is because str_extract_all doesn’t know how many matches there are going to be in each string, and because it doesn’t know that we are thinking of these as initials and want them all in the same string.

That is, we would like a vector that looks like c("DS", "BC").

After learning about sapply we could apply str_flatten to each element of the list, but that seems like a long road to walk for this purpose.

Perhaps easier is to do the opposite and remove all of the things that don’t match capital letters!

To do this, we use str_remove_all, which again takes two arguments, string and pattern to remove.

We need to know that regular expressions can be told to not mach characters by including ^ inside the brackets:

## [1] "DS" "BC"The last two functions that we are looking at are str_detect and str_split.

These are most useful within a data analysis flow. The function str_detect accepts string and pattern and returns TRUE if the pattern is detected, and FALSE if the pattern is not detected.

For example, we could look for the pattern an in our_names:

## [1] FALSE TRUENote that if we had put "an" inside a bracket like this "[an]", then str_detect would have looked for a pattern consisting of either an a or an n, and we would have received two TRUE values.

Finally, suppose we want to split the strings into first name and last name.

We use str_split or its sibling function str_split_fixed. The function str_split takes two arguments, a string and a pattern on which to split the string.

Every time the function sees the pattern, it splits off a piece of the string.

For example,

## [[1]]

## [1] "Darrin" "Speegle"

##

## [[2]]

## [1] "Bryan" "Clair"Note that once again, the function returns a list of vectors of strings.

If we know that we only want to split into at most 3 groups, say, then we can use str_split_fixed to indicate that.

The first two arguments are the same, but str_split_fixed has a third argument, n which is the number of strings that we want to split each string into.

## [,1] [,2] [,3]

## [1,] "Darrin" "Speegle" ""

## [2,] "Bryan" "Clair" ""Let’s apply string manipulation to understand the Genres variable of the fosdata::movies data set.

What are the best comedies? We look for the highest rated movies that have been rated at least 50 times and include “Comedy” in the genre list.

movies %>%

filter(str_detect(genres, "Comedy")) %>%

group_by(title) %>%

summarize(rating = mean(rating), count = n()) %>%

filter(count >= 50) %>%

arrange(desc(rating)) %>%

print(n = 10)## # A tibble: 181 x 3

## title rating count

## <chr> <dbl> <int>

## 1 Dr. Strangelove or: How I Learned to Stop Worrying and … 4.27 97

## 2 Princess Bride, The (1987) 4.23 142

## 3 Pulp Fiction (1994) 4.20 307

## 4 Amelie (Fabuleux destin d'Amélie Poulain, Le) (2001) 4.18 120

## 5 Forrest Gump (1994) 4.16 329

## 6 Monty Python and the Holy Grail (1975) 4.16 136

## 7 Snatch (2000) 4.16 93

## 8 Life Is Beautiful (La Vita è bella) (1997) 4.15 88

## 9 Fargo (1996) 4.12 181

## 10 Toy Story 3 (2010) 4.11 55

## # … with 171 more rowsUse fosdata::movies to find the highest rated movies of 1999.

The challenge here is that the year that the movie was released is hidden at the end of the title of the movie.

Let’s pull out the year of release and move it into its own variable. We use regular expressions to do this.

The character $ inside a regular expression means that the pattern occurs at the end of a string.

Let’s extract 4 digits, followed by a right parenthesis, at the end of a string.

The pattern for this is “[0-9]{4}\)$”.

The {4} indicates that we are matching the digit pattern four times, we have to escape the right parenthesis using \\ because ) is a reserved character in regular expressions,

and the $ indicates that we are anchoring this to the end of the string. Let’s test it out:

## [1] "1995)" "1995)" "1995)" "1995)" "1995)" "1996)"Hmmm, even better would be to get rid of the right parenthesis. We can use the look-ahead to only pull out 4 digits if they are followed by a right parenthesis and then the end of string.

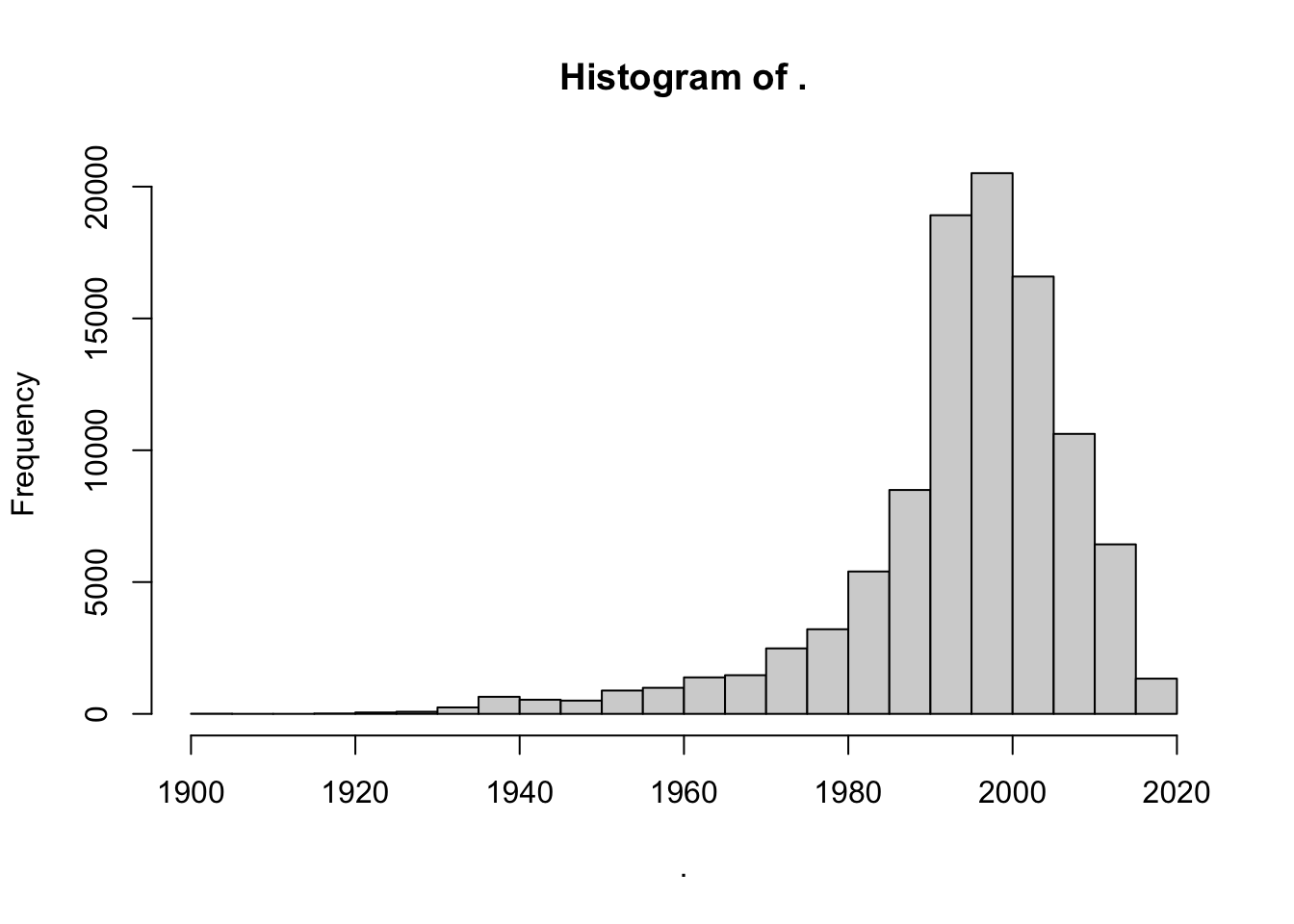

## [1] "1995" "1995" "1995" "1995" "1995" "1996"Sweet! Let’s convert to numeric and do a histogram, just to make sure no weird stuff snuck in.

Looks pretty good. Let’s create a new variable called year that contains the year of release of the movie.

movies <- mutate(movies, year = str_extract(title, "[0-9]{4}(?=\\)$)") %>%

as.numeric())

summary(movies$year)## Min. 1st Qu. Median Mean 3rd Qu. Max. NA's

## 1902 1990 1997 1994 2003 2018 30Whoops. We have 30 NA years. Let’s take a look and see what happened.

## # A tibble: 30 x 1

## title

## <chr>

## 1 "Black Mirror"

## 2 "Runaway Brain (1995) "

## 3 "The Adventures of Sherlock Holmes and Doctor Watson"

## 4 "Maria Bamford: Old Baby"

## 5 "Generation Iron 2"

## 6 "Ready Player One"

## 7 "Babylon 5"

## 8 "Ready Player One"

## 9 "Nocturnal Animals"

## 10 "Guilty of Romance (Koi no tsumi) (2011) "

## # … with 20 more rowsAha! Some of the movies don’t have years, others have an extra space after the end parentheses. We can handle the extra space with \\s*, which matches zero or more spaces.

pattern <- "[0-9]{4}(?=\\)\\s*$)"

movies <- mutate(movies,

year = str_extract(title, pattern) %>% as.numeric()

)

summary(movies$year)## Min. 1st Qu. Median Mean 3rd Qu. Max. NA's

## 1902 1990 1997 1994 2003 2018 17Finally, what were the five highest rated movies of 1999 that had at least 50 ratings?

movies %>%

filter(year == 1999) %>%

group_by(title) %>%

summarize(mean = mean(rating), count = n()) %>%

filter(count >= 50) %>%

arrange(desc(mean)) %>%

print(n = 5)## # A tibble: 23 x 3

## title mean count

## <chr> <dbl> <int>

## 1 Fight Club (1999) 4.27 218

## 2 Matrix, The (1999) 4.19 278

## 3 Green Mile, The (1999) 4.15 111

## 4 Office Space (1999) 4.09 94

## 5 American Beauty (1999) 4.06 204

## # … with 18 more rowsOne final common usage of regular expressions is within select. If your data frame has a lot of variables, and you want to select out some that follow a certain pattern, then knowing regular expressions can be very helpful.

Consider the accelerometer data in the fosdata package. This data set will be described in more detail in Chapter 11.

## [1] "participant"

## [2] "machine"

## [3] "set"

## [4] "contraction_mode"

## [5] "time_video_rater_cv_ms"

## [6] "time_video_rater_dg_ms"

## [7] "time_smartphone_1_ms"

## [8] "time_smartphone_2_ms"

## [9] "video_rater_mean_ms"

## [10] "smartphones_mean_ms"

## [11] "relative_difference"

## [12] "difference_video_smartphone_ms"

## [13] "mean_video_smartphone_ms"

## [14] "contraction_mode_levels"

## [15] "difference_video_raters_ms"

## [16] "difference_smartphones_ms"

## [17] "video_smartphone_difference_outlier"

## [18] "rater_difference_outlier"

## [19] "smartphone_difference_outlier"

## [20] "normalized_error_smartphone"

## [21] "participant_age_levels"

## [22] "participant_age_years"

## [23] "participant_height_cm"

## [24] "participant_weight_kg"

## [25] "participant_gender"Look at all of those variables! Most of the data sets that we see in this book have been condensed, but it is not at all uncommon for experimenters to collect data on 30-200 values. Let’s suppose you wanted to create a new data frame that had all of the time measurements in it. Those are the variables whose names end with ms. We can do this by combining select with the regular expression ms$, which matches the letters ms and the end of the string.

## # A tibble: 12,245 x 10

## time_video_rate… time_video_rate… time_smartphone… time_smartphone…

## <dbl> <dbl> <dbl> <dbl>

## 1 1340 1360 1650 1700

## 2 1160 1180 1350 1350

## 3 1220 1240 1400 1350

## 4 1260 1280 1400 1350

## 5 1560 1180 1350 1300

## # … with 12,240 more rows, and 6 more variables:

## # video_rater_mean_ms <dbl>, smartphones_mean_ms <dbl>,

## # difference_video_smartphone_ms <dbl>,

## # mean_video_smartphone_ms <dbl>, difference_video_raters_ms <dbl>,

## # difference_smartphones_ms <dbl>To build a data frame that includes both variables that start with smartphone or end with ms, use the regular expression | character for “or”.

## # A tibble: 12,245 x 11

## time_video_rate… time_video_rate… time_smartphone… time_smartphone…

## <dbl> <dbl> <dbl> <dbl>

## 1 1340 1360 1650 1700

## 2 1160 1180 1350 1350

## 3 1220 1240 1400 1350

## 4 1260 1280 1400 1350

## 5 1560 1180 1350 1300

## # … with 12,240 more rows, and 7 more variables:

## # video_rater_mean_ms <dbl>, smartphones_mean_ms <dbl>,

## # difference_video_smartphone_ms <dbl>,

## # mean_video_smartphone_ms <dbl>, difference_video_raters_ms <dbl>,

## # difference_smartphones_ms <dbl>,

## # smartphone_difference_outlier <lgl>6.6 The structure of data

6.6.1 Tidy data: pivoting

The tidyverse is designed for tidy data, and a key feature of tidy data is that all data should be stored in a rectangular array, with

each row an observation and each column a variable. In particular, no information should be stored in variable names. As an example, consider the

built in data set WorldPhones which gives the number of telephones in each region of the world, back in the day when telephones were still gaining popularity as a device for making phone calls.

The WorldPhones data is stored as a matrix with row names, so we convert it to a tibble called phones and preserve the row names in a new variable called Year. We also clean up the names into a standard format using janitor::clean_names.

## # A tibble: 7 x 8

## year n_amer europe asia s_amer oceania africa mid_amer

## <chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 1951 45939 21574 2876 1815 1646 89 555

## 2 1956 60423 29990 4708 2568 2366 1411 733

## 3 1957 64721 32510 5230 2695 2526 1546 773

## 4 1958 68484 35218 6662 2845 2691 1663 836

## 5 1959 71799 37598 6856 3000 2868 1769 911

## 6 1960 76036 40341 8220 3145 3054 1905 1008

## 7 1961 79831 43173 9053 3338 3224 2005 1076Notice that the column names are regions of the world, so that there is useful information stored in those names.

Every entry in this data set gives the value for a year and a region, so the tidy format should instead have three variables: year, region, and telephones. Making this change will cause this data set to become much longer. Instead of 7 rows and 7 columns, we will have 49 rows, one for each of the \(7\times7\) year and region combinations.

The tool to make this change is the pivot_longer function from the tidyr package (part of the tidyverse):

## # A tibble: 49 x 3

## year region telephones

## <chr> <chr> <dbl>

## 1 1951 n_amer 45939

## 2 1951 europe 21574

## 3 1951 asia 2876

## 4 1951 s_amer 1815

## 5 1951 oceania 1646

## 6 1951 africa 89

## 7 1951 mid_amer 555

## 8 1956 n_amer 60423

## 9 1956 europe 29990

## 10 1956 asia 4708

## # … with 39 more rowsThis function used four arguments. The first was the data frame we wanted to pivot, in this case phones. Second, we specified which columns to use, with the expression cols = -year, which meant “all columns except year”. Finally, we told the function that the names of the columns should become a new variable called “region”, and the values in those columns should become a new variable called “telephones”.

As a more complex example, let’s look at the billboard data set provided with the tidyr package. This contains the weekly Billboard rankings for each song that entered the top 100 during the year 2000. Observe that each row is a song (track) and the track’s place on the charts is stored in up to 76 columns named wk1 through wk76. There are many NA in the data since none of these tracks were ranked for 76 consecutive weeks.

## # A tibble: 5 x 79

## artist track date.entered wk1 wk2 wk3 wk4 wk5 wk6 wk7

## <chr> <chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 2 Pac Baby… 2000-02-26 87 82 72 77 87 94 99

## 2 2Ge+h… The … 2000-09-02 91 87 92 NA NA NA NA

## 3 3 Doo… Kryp… 2000-04-08 81 70 68 67 66 57 54

## 4 3 Doo… Loser 2000-10-21 76 76 72 69 67 65 55

## 5 504 B… Wobb… 2000-04-15 57 34 25 17 17 31 36

## # … with 69 more variables: wk8 <dbl>, wk9 <dbl>, wk10 <dbl>,

## # wk11 <dbl>, wk12 <dbl>, wk13 <dbl>, wk14 <dbl>, wk15 <dbl>,

## # wk16 <dbl>, wk17 <dbl>, wk18 <dbl>, wk19 <dbl>, wk20 <dbl>,

## # wk21 <dbl>, wk22 <dbl>, wk23 <dbl>, wk24 <dbl>, wk25 <dbl>,

## # wk26 <dbl>, wk27 <dbl>, wk28 <dbl>, wk29 <dbl>, wk30 <dbl>,

## # wk31 <dbl>, wk32 <dbl>, wk33 <dbl>, wk34 <dbl>, wk35 <dbl>,

## # wk36 <dbl>, wk37 <dbl>, wk38 <dbl>, wk39 <dbl>, wk40 <dbl>,

## # wk41 <dbl>, wk42 <dbl>, wk43 <dbl>, wk44 <dbl>, wk45 <dbl>,

## # wk46 <dbl>, wk47 <dbl>, wk48 <dbl>, wk49 <dbl>, wk50 <dbl>,

## # wk51 <dbl>, wk52 <dbl>, wk53 <dbl>, wk54 <dbl>, wk55 <dbl>,

## # wk56 <dbl>, wk57 <dbl>, wk58 <dbl>, wk59 <dbl>, wk60 <dbl>,

## # wk61 <dbl>, wk62 <dbl>, wk63 <dbl>, wk64 <dbl>, wk65 <dbl>,

## # wk66 <lgl>, wk67 <lgl>, wk68 <lgl>, wk69 <lgl>, wk70 <lgl>,

## # wk71 <lgl>, wk72 <lgl>, wk73 <lgl>, wk74 <lgl>, wk75 <lgl>,

## # wk76 <lgl>This data is not tidy, since the week column names contain information.

To do any sort of analysis on this data, the week needs to be a variable.

We use pivot_longer to replace all 76 week columns with two columns, one called “week” and another called “rank”:

long.bill <- billboard %>%

pivot_longer(

cols = wk1:wk76,

names_to = "week", values_to = "rank",

values_drop_na = TRUE

)

long.bill## # A tibble: 5,307 x 5

## artist track date.entered week rank

## <chr> <chr> <date> <chr> <dbl>

## 1 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk1 87

## 2 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk2 82

## 3 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk3 72

## 4 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk4 77

## 5 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk5 87

## 6 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk6 94

## 7 2 Pac Baby Don't Cry (Keep... 2000-02-26 wk7 99

## 8 2Ge+her The Hardest Part Of ... 2000-09-02 wk1 91

## 9 2Ge+her The Hardest Part Of ... 2000-09-02 wk2 87

## 10 2Ge+her The Hardest Part Of ... 2000-09-02 wk3 92

## # … with 5,297 more rowsThe values_drop_na = TRUE argument removes the NA values from the rank column while pivoting.

Which 2000 song spent the most weeks on the charts? This question would have been near impossible to answer without tidying the data. Now we can group by track and count the number of times it was ranked:

long.bill %>%

group_by(track) %>%

summarize(weeks_on_chart = n()) %>%

top_n(n = 1, wt = weeks_on_chart)## # A tibble: 1 x 2

## track weeks_on_chart

## <chr> <int>

## 1 Higher 57“Higher”, by Creed, entered the charts in 2000 and spent 57 weeks in the top 100 (though not consecutively!)

The tidyr package also provides a function pivot_wider which performs the opposite transformation to pivot_longer. You will need to provide a names_from value, which contains the variable name to get the column names from, and a values_from value or values, which gives the columns to get the cell values from. You often will also need to provide an id_cols which uniquely identifies each observation. Let’s see how to use it in an example.

Consider the babynames data set in the babynames package. This consists of all names of babies born in the USA from 1880 through 2017, together with the sex assigned to the baby, the number of babies of that sex given that name in that year, and the proportion of babies with that sex in that year that were given the name. Only names that were given to five or more babies are included.

## # A tibble: 6 x 5

## year sex name n prop

## <dbl> <chr> <chr> <int> <dbl>

## 1 1880 F Mary 7065 0.0724

## 2 1880 F Anna 2604 0.0267

## 3 1880 F Emma 2003 0.0205

## 4 1880 F Elizabeth 1939 0.0199

## 5 1880 F Minnie 1746 0.0179

## 6 1880 F Margaret 1578 0.0162Convert the babynames data from year 2000 into wide format, where each row is a name together with the number of male babies and female babies with that name. Note that we need to either select down to the variables of interest or we would need to provide the id columns.

babynames %>%

filter(year == 2000) %>%

pivot_wider(id_cols = name, names_from = sex, values_from = n)## # A tibble: 27,512 x 3

## name F M

## <chr> <int> <int>

## 1 Emily 25953 30

## 2 Hannah 23080 25

## 3 Madison 19967 138

## 4 Ashley 17997 82

## 5 Sarah 17697 26

## 6 Alexis 17629 2714

## 7 Samantha 17266 21

## 8 Jessica 15709 27

## 9 Elizabeth 15094 22

## 10 Taylor 15078 2853

## # … with 27,502 more rowsNot every name had both a male and a female entry. Those are listed as NA in the data frame. If we would prefer them to be 0, we can add values_fill = 0.

Now that we have the data in a nice format, let’s determine the most common name for which the number of male babies was within 200 of the number of female babies.

babynames %>%

filter(year == 2000) %>%

pivot_wider(

id_cols = name, names_from = sex,

values_from = n, values_fill = 0

) %>%

filter(M > F - 200, M < F + 200) %>%

mutate(total_names = F + M) %>%

top_n(5, total_names)## # A tibble: 5 x 4

## name F M total_names

## <chr> <int> <int> <int>

## 1 Peyton 1967 2001 3968

## 2 Skyler 1284 1472 2756

## 3 Jessie 719 533 1252

## 4 Sage 557 392 949

## 5 Justice 477 656 1133We see by this criteria, Peyton is the most common gender neutral name, followed by Skyler, Jessie, Justice and Sage.

6.6.2 Using join to merge data frames

In this section, we briefly introduce the join family of functions, by focusing on left_join.

The function left_join joins two data frames together into one.

The syntax is left_join(x, y, by = NULL), where x and y are data frames and by is a list of columns that you want to join the data frames by

(there are additional arguments that we won’t be using).

The way I remember what left_join does is that it adds the columns of y to the columns of x by matching on columns with the same names.

As an easy example, consider the band_members and band_instruments data sets,

both of which are included in the dplyr package.

## # A tibble: 3 x 2

## name band

## <chr> <chr>

## 1 Mick Stones

## 2 John Beatles

## 3 Paul Beatles## # A tibble: 3 x 2

## name plays

## <chr> <chr>

## 1 John guitar

## 2 Paul bass

## 3 Keith guitar## Joining, by = "name"## # A tibble: 3 x 3

## name band plays

## <chr> <chr> <chr>

## 1 Mick Stones <NA>

## 2 John Beatles guitar

## 3 Paul Beatles bassNow, what happens if Paul learns how to play the drums as well?

## # A tibble: 4 x 2

## name plays

## <chr> <chr>

## 1 John guitar

## 2 Paul bass

## 3 Keith guitar

## 4 Paul drums## Joining, by = "name"## # A tibble: 4 x 3

## name band plays

## <chr> <chr> <chr>

## 1 Mick Stones <NA>

## 2 John Beatles guitar

## 3 Paul Beatles bass

## 4 Paul Beatles drumsWe see that if there are multiple matches to name, then left_join makes multiple rows in the new data frame.

We also used left_join to transform the movies data set that we have been working with throughout this chapter.

The original files as downloaded from MovieLens were as follows.

movies <- read.csv("https://stat.slu.edu/~speegle/data/ml-latest-small/movies.csv")

ratings <-

read.csv("https://stat.slu.edu/~speegle/data/ml-latest-small/ratings.csv")The movies file contained the movie names and genres, while the ratings file contained the user ID, the rating, and the timestamp.

## movieId title

## 1 1 Toy Story (1995)

## 2 2 Jumanji (1995)

## 3 3 Grumpier Old Men (1995)

## 4 4 Waiting to Exhale (1995)

## 5 5 Father of the Bride Part II (1995)

## 6 6 Heat (1995)

## genres

## 1 Adventure|Animation|Children|Comedy|Fantasy

## 2 Adventure|Children|Fantasy

## 3 Comedy|Romance

## 4 Comedy|Drama|Romance

## 5 Comedy

## 6 Action|Crime|Thriller## userId movieId rating timestamp

## 1 1 1 4 964982703

## 2 1 3 4 964981247

## 3 1 6 4 964982224

## 4 1 47 5 964983815

## 5 1 50 5 964982931

## 6 1 70 3 964982400We combine the two data sets using left_join to get the one that we used throughout the chapter.

Good, now, when would you use this? Well, many times you have data from multiple sources that you want to combine into one data frame.

Consider the data sets pres_election available in the fosdata package, and unemp available in the mapproj package.

These data sets give the by county level presidential election results from 2000-2016, and the population and unemployment rate of all counties in the US.

Some packages, such as mapproj, require you to use a command like data("unemp")) to access data sets.

## Loading required package: mapsSuppose we want to create a new data frame that contains both the election results and the unemployment data. That is a job for left_join.

Because the two data frames didn’t have any column names in common, the join required by = to specify which columns to join by.

## year state state_po county FIPS office candidate

## 1 2000 Alabama AL Autauga 1001 President Al Gore

## 2 2000 Alabama AL Autauga 1001 President George W. Bush

## 3 2000 Alabama AL Autauga 1001 President Ralph Nader

## 4 2000 Alabama AL Autauga 1001 President Other

## 5 2000 Alabama AL Baldwin 1003 President Al Gore

## 6 2000 Alabama AL Baldwin 1003 President George W. Bush

## party candidatevotes totalvotes version pop unemp

## 1 democrat 4942 17208 20191203 23288 9.7

## 2 republican 11993 17208 20191203 23288 9.7

## 3 green 160 17208 20191203 23288 9.7

## 4 NA 113 17208 20191203 23288 9.7

## 5 democrat 13997 56480 20191203 81706 9.1

## 6 republican 40872 56480 20191203 81706 9.1Word to the wise: when dealing with spatial data that is organized by county, it is much easier to use the fips code than the county name, because there are variances in capitalization and other issues that come up when trying to use county names.

6.7 The apply family

The focus of this book is on using dplyr tools when manipulating data sets.

However, there will be times when it will be useful to know the base R tools for doing some of the same tasks.

At a minimum, it can be useful to know these tools when reading other people’s code! The apply family consists of quite a few functions,

including apply, sapply, lapply, vapply, tapply, mapply and even replicate! While tapply and its variants might be the closest in spirit to the way we were using dplyr in this chapter, we will focus on apply and sapply.

All of the functions in the apply family are implicit loops, like replicate.

The typical usage of apply is apply(X, MARGIN, FUN). The function apply applies the function FUN to either the rows or columns of X,

depending on whether MARGIN is 1 (rows) or 2 (columns). So, the argument X is typically a matrix or data frame, MARGIN is either 1 or 2, and FUN is a function, such as sum or mean.

Consider the data set USJudgeRatings, which contains the ratings of US judges by lawyers on various facets.

## CONT INTG DMNR DILG CFMG DECI PREP FAMI ORAL WRIT PHYS

## AARONSON,L.H. 5.7 7.9 7.7 7.3 7.1 7.4 7.1 7.1 7.1 7.0 8.3

## ALEXANDER,J.M. 6.8 8.9 8.8 8.5 7.8 8.1 8.0 8.0 7.8 7.9 8.5

## ARMENTANO,A.J. 7.2 8.1 7.8 7.8 7.5 7.6 7.5 7.5 7.3 7.4 7.9

## BERDON,R.I. 6.8 8.8 8.5 8.8 8.3 8.5 8.7 8.7 8.4 8.5 8.8

## BRACKEN,J.J. 7.3 6.4 4.3 6.5 6.0 6.2 5.7 5.7 5.1 5.3 5.5

## BURNS,E.B. 6.2 8.8 8.7 8.5 7.9 8.0 8.1 8.0 8.0 8.0 8.6

## RTEN

## AARONSON,L.H. 7.8

## ALEXANDER,J.M. 8.7

## ARMENTANO,A.J. 7.8

## BERDON,R.I. 8.7

## BRACKEN,J.J. 4.8

## BURNS,E.B. 8.6Which judge had the highest mean rating? Which rating scale had the highest mean across all judges?

Using apply across rows computes the mean rating for each judge.

## AARONSON,L.H. ALEXANDER,J.M. ARMENTANO,A.J. BERDON,R.I.

## 7.291667 8.150000 7.616667 8.458333

## BRACKEN,J.J. BURNS,E.B.

## 5.733333 8.116667To pull the highest mean rating:

## [1] 8.858333## CALLAHAN,R.J.

## 7We see that RJ Callahan had the highest rating of 8.858333.

Using apply across columns computes the mean rating of all of the judges in each category.

## CONT INTG DMNR DILG CFMG DECI PREP FAMI

## 7.437209 8.020930 7.516279 7.693023 7.479070 7.565116 7.467442 7.488372

## ORAL WRIT PHYS RTEN

## 7.293023 7.383721 7.934884 7.602326Judges scored highest on the Integrity scale.

The sapply function has as its typical usage sapply(X, FUN), where X is a vector, and FUN is the function that we wish to act on each element of X.

Unfortunately, sapply is not type consistent in the way it simplifies results, sometimes returning a vector, sometimes a matrix. This gives sapply a bad reputation, but it is still useful for exploratory analysis.

Approximate the sd of a \(t\) random variable with 5, 10, 15, 20, 25 and 30 degrees of freedom.

To do this for 5 df, we simulate 10000 random values of \(t\) and take the standard deviation:

## [1] 1.328793To repeat this computation for the other degrees of freedom, turn the simulation into a function

and use sapply to feed it each of the df values.

## [1] 1.260566 1.114039 1.082799 1.049515 1.035242 1.035228sapply took each element of the vector X, applied the function to it, and returned the results as a vector.

The symbol n in function(n) is a dummy variable, and can be replaced by any legal R variable name.

The function replicate is a “convenience wrapper” for a common usage of sapply, so that replicate(100, {expr}) is equivalent to sapply(1:100, function(x) {expr}), where in this case, the function inside of sapply will have no dependence on x.

Vignette: A dplyr murder mystery

There has been a murder in dplyr City! You have lost your notes, but you remember that the murder took place on January 15, 2018.

All of the clues that you will need to solve the murder are contained in the dplyrmurdermystery package once you load it into your environment.

The dplyr murder mystery is a whodunnit, where you use your dplyr skillz to analyze data sets that lead to the solution of a murder mystery. The original caper of this sort was the Command Line Murder Mystery,31 which was recast as SQL Murder Mystery.32

We have created an R package that is a small modification of the SQL Murder Mystery for you to enjoy. You can download the package via

Once you install the package, you can load the data into your environment by typing

This loads quite a bit of data into your environment, so you may want to make sure you are starting with a clean environment before you do it.

Vignette: Data and gender

In most studies with human subjects, the investigators collect demographic information on their subjects to control for lurking variables that may interact with the variables under investigation. One of the most commonly collected demographic variables is gender. In this vignette, we discuss current best practices for collecting gender information in surveys and studies.

Why not male or female?

The term transgender refers to people whose gender expression defies social expectations. More narrowly, the term transgender refers to people whose gender identity or gender expression differs from expectations associated with the sex assigned at birth33.

Approximately 1 in 250 adults in the U.S. identify as transgender, with that proportion expected to rise as survey questions improve and stigma decreases.34 For teenagers, this proportion may be as high as 0.7%.35

The traditional “Male or Female?” survey question fails to measure the substantial transgender population, and the under- or non-representation of transgender individuals is a barrier to understanding social determinants and health disparities faced by this population.

How to collect gender information

Best practices36 in collecting gender information are to use a two-step approach, with the following questions:

- Assigned sex at birth: What sex were you assigned at birth, on your original birth certificate?

- Male

- Female

- Current gender identity: How do you describe yourself? (check one)

- Male

- Female

- Transgender

- Do not identify as female, male, or transgender

These questions serve as guides. Question 1 has broad agreement, while there is still discussion and research being done as to what the exact wording of Question 2 should be. It is also important to talk to people who have expertise in or familiarity with the specific population you wish to sample from, to see whether there are any adjustments to terminology or questions that should be made for that population.

In general, questions related to sex and gender are considered “sensitive”. Respondents may be reluctant to answer sensitive questions or may answer inaccurately. For these questions, privacy matters. When possible, place sensitive questions on a self-administered survey. Placing gender and sexual orientation questions at the start of a survey may also decrease privacy, since those first questions are encountered at the same time for all subjects and are more visible on paper forms.

How to incorporate gender in data analysis

The relatively small samples of transgender populations create challenges for analysis. One type of error is called a specificity error, and occurs when subjects mistakenly indicate themselves as transgender or another gender minority. The transgender population is less than 1% of the overall population. So, if even one in one hundred subjects misclassify themselves, then the subjects identifying as transgender in the sample will be a mix of half transgender individuals and half misclassified individuals. The best way to combat specificity errors is with carefully worded questions and prepared language to explain the options if asked.

Small samples lead to large margin of error, and make it difficult to detect statistically significant differences within groups. For an individual survey, this may prevent analysis of fine-grained gender information. Aggregating data over time, space, and across surveys can allow analysis of gender minority groups.

Example

The data fosdata::gender was collected by Sell, Goldberg, Conron37 to determine if it is feasible to sample from rare and dispersed populations using the online service “Google Android Panel”.

They use the two-step approach to gender, with a sex-at-birth question and a gender identity question that is broadly worded and which allows respondents

to select multiple options. This multiple option question is coded as seven different T/F variables beginning with gender_.

## 'data.frame': 20305 obs. of 10 variables:

## $ gender_male : logi FALSE FALSE FALSE FALSE FALSE TRUE ...

## $ gender_female : logi TRUE TRUE TRUE TRUE TRUE FALSE ...

## $ gender_trans : logi FALSE FALSE FALSE TRUE FALSE FALSE ...

## $ gender_queer : logi FALSE FALSE FALSE FALSE FALSE FALSE ...

## $ gender_not_sure: logi FALSE FALSE FALSE FALSE FALSE FALSE ...

## $ gender_unclear : logi FALSE FALSE FALSE FALSE FALSE FALSE ...

## $ gender_na : logi FALSE FALSE FALSE FALSE FALSE FALSE ...

## $ sex_at_birth : Factor w/ 2 levels "Female","Male": 1 1 1 1 1 2 1 2 1 1 ...

## $ hispanic : Factor w/ 3 levels "Don't know","No",..: 2 2 2 2 2 3 3 2 2 3 ...

## $ race : chr "White" "White" "Black or African American" "White" ...As a first step, let’s explore how many respondents selected more than one option:

gender <- gender %>%

mutate(responses = rowSums(across(starts_with("gender"))))

table(gender$responses)##

## 1 2 3 4 5 6

## 20050 200 34 1 3 17Here we used dplyr’s across function to say we want to take row sums of responses across all variables that start with “gender”. When summing,

TRUE values count as 1.

Observe that the vast majority of the respondents selected only one value. However prevalence of respondents with two or more values is not small when compared with the number of non-cisgender individuals (only 757 as we will see shortly). In particular, 17 individuals answered yes to six of the seven gender questions available, and those answers seem likely to be due to user confusion or error possibly leading to specificity error in our results.

Following Sell et. al., we compute a “transgender status” summary variable which labels respondents reporting:

- a current gender identity of male and being assigned male at birth as “Male (cisgender)”

- a current gender identity of female and being assigned female at birth as “Female (cisgender)”

- a current gender identity of male, transgender, or Genderqueer/Gender non-conforming and being assigned female at birth as “Male,Trans,GenQ/Female@Birth”

- a current gender identity of female, transgender, or Genderqueer/Gender non-conforming and being assigned male at birth as “Female,Trans,GenQ/Male@Birth”

We use dplyr’s case_when statement to select the cases.

gender <- gender %>%

mutate(trans_status = case_when(

sex_at_birth == "Male" & gender_male & responses == 1 ~

"Male (cisgender)",

sex_at_birth == "Female" & gender_female & responses == 1 ~

"Female (cisgender)",

sex_at_birth == "Female" & gender_male | gender_trans | gender_queer ~

"Male,Trans,GenQ/Female@Birth",

sex_at_birth == "Male" & gender_female | gender_trans | gender_queer ~

"Female,Trans,GenQ/Male@Birth"

))

gender %>%

group_by(trans_status) %>%

summarize(n())## # A tibble: 5 x 2

## trans_status `n()`

## <chr> <int>

## 1 Female (cisgender) 9733

## 2 Female,Trans,GenQ/Male@Birth 47

## 3 Male (cisgender) 9815

## 4 Male,Trans,GenQ/Female@Birth 396

## 5 <NA> 314We finish this example by making a table of trans status gender by race. For simplicity, we only look at respondents with a single answer to the race question and ignore the hispanic variable.

gender %>%

filter(!str_detect(race, ",")) %>%

mutate(trans = str_detect(trans_status, "Trans")) %>%

group_by(race) %>%

summarize(

respondents = n(),

trans_pct = round(100 * mean(trans, na.rm = TRUE), 1)

)## # A tibble: 6 x 3

## race respondents trans_pct

## <chr> <int> <dbl>

## 1 American Indian or Alaskan Native 170 9.1

## 2 Asian 1647 1.5

## 3 Black or African American 1212 2.1

## 4 None of the above 626 3.2

## 5 Some other race 842 3.6

## 6 White 13443 1.5Exercises

Exercises 6.1 - 6.2 require material through Section 6.2.

Consider the austen data set in the fosdata package.

This data frame contains the complete texts of Emma and Pride and Prejudice, with additional information which you can read about in the help page for the data set.

- Create a new data frame that consists only of the words in Emma.

- Create a new data frame that contains only the variables

word,word_lengthandnovel. - Create a new data frame that has the words in both books arranged in descending word length.

- Create a new data frame that contains only the longest words that appeared in either of the books.

- What was the mean word length in the two books together?

- Create a new data frame that consists only of the distinct words found in the two books, together with the word length and sentiment score variables. This requires you to use both

selectanddistinct.

Consider the barnacles data set in the fosdata package.

This data consists of the counts of the number of barnacles on coral reefs, the area of coral reef that was counted, the percent of coral cover, and the depth of the coral reef. More information can be found in the help page of fosdata::barnacles. Create a new data frame that contains, in addition to the other variables, a variable count_per_m, which is the number of barnacles divided by the area of reef.

Exercises 6.3 - 6.25 require material through Section 6.4.

Consider the mpg data set in the ggplot2 package.

- Which car(s) had the highest highway gas mileage? (For the purposes of this question, consider each observation a different car.)

- Compute the mean city mileage for compact cars.

- Compute the mean city mileage for each class of cars, and arrange in decreasing order.

- Which cars have the smallest absolute difference between highway mileage and city mileage? (For the purposes of this question, consider each observation a different “car”.)

- Compute the mean highway mileage for each year, and arrange in decreasing order.

This question uses the DrinksWages from the HistData package.

This data, gathered in 1910, was a survey of people working in various trades (bakers, plumbers, goldbeaters, etc.).

The trades are assigned class values of A, B, or C based on required skill. For each trade, he counted the number of workers who drink (drinks), number of sober workers (sober), and recorded wage information (wage).

There is also a column n = drinks + sober which is the total number of workers surveyed for each trade.

- Compute the mean wages for each class, A, B, and C.

- Find the three trades with the highest proportion of drinkers. Consider only trades with 10 or more workers in the survey.

Consider the data set oly12 from the VGAMdata package (that you will probably need to install).

It has individual competitor information from the Summer 2012 London Olympic Games.

- According to this data, which country won the most medals? How many did that country win? (You need to sum Gold, Silver, and Bronze)

- Which countries were the heaviest? Compute the mean weight of male athletes for all countries with at least 10 competitors, and report the top three.

Exercises 6.6 - 6.10 all use the movies data set from the fosdata library.

What is the movie with the highest mean rating that has been rated at least 30 times?

Which genre has been rated the most? (For the purpose of this, consider Comedy and Comedy|Romance completely different genres, for example.)

Which movie in the genre Comedy|Romance that has been rated at least 50 times has the lowest mean rating? Which has the highest mean rating?

Which movie that has a mean rating of 4 or higher has been rated the most times?

Which user gave the highest mean ratings?

Exercises 6.11 - 6.16 all use the Batting data set from the Lahman library.

This gives the batting statistics of every player who has played baseball from 1871 through the present day.

Which player has been hit-by-pitch the most number of times?

How many doubles were hit in 1871?

Which team has the most total number of home runs, all time?

Which player who has played in at least 500 games has scored the most number of runs per game?

- Which player has the most lifetime at bats without ever having hit a home run?

- Which active player has the most lifetime at bats without ever having hit a home run? (An active player is someone with an entry in the most recent year of the data set).

Make sure to take into account stint in these problems.

- Verify that Curtis Granderson hit the most triples in a single season since 1960.

- In which season did the major league leader in triples have the fewest triples?

- In which season was there the biggest difference between the major league leader in stolen bases (SB) and the player with the second most stolen bases?

Exercises 6.17 - 6.24 all use the Pitching data set from the Lahman library.

This gives the pitching statistics of every pitcher who has played baseball from 1871 through the present day.

- Which pitcher has won (W) the most number of games?

- Which pitcher has lost (L) the most number of games?

Which pitcher has hit the most opponents with a pitch (HBP)?

Which year had the most number of complete games (CG)?

Among pitchers who have won at least 100 games, which has the highest winning percentage? (Winning percentage is wins divided by wins + losses.)

Among pitchers who have struck out at least 500 batters, which has the highest strikeout to walk ratio? (Strikeout to walk ratio is SO/BB.)

List the pitchers for the St Louis Cardinals (SLN) in 2006 with at least 30 recorded outs (IPouts), sorted by ERA from lowest to highest.

A balk (BK) is a subtle technical mistake that a pitcher can make when throwing. What were the top five years with the most balks in major league baseball history? Why was 1988 known as “the year of the balk”?

Which pitcher has the most outs pitched (IPouts) of all the pitchers who have more bases on balls (BB) than hits allowed (H)? Who has the second most?

M&M’s are small pieces of candy that come in various colors and are sold in various sizes of bags. One popular size for Halloween is the “Fun Size,” which typically contains between 15 and 18 M&M’s. For the purposes of this problem, we assume the mean number of candies in a bag is 17 and the standard deviation is 1. According to Rick Wicklin38, the proportion of M&M’s of various colors produced in the New Jersey M&M factory are as follows.

| Color | Proportion |

|---|---|

| Blue | 25.0 |

| Orange | 25.0 |

| Green | 12.5 |

| Yellow | 12.5 |

| Red | 12.5 |

| Brown | 12.5 |

Suppose that you purchase a big bag containing 200 Fun Size M&M packages. The purpose of this exercise it to estimate the probability that each of your bags has a different distribution of colors.

- We will model the number of M&M’s in a bag with a binomial random variable. Find values of \(n\) and \(p\) such that a binomial random variable with parameters \(n\) and \(p\) has mean approximately 17 and variance approximately 1.

- (Hard) Create a data frame that has its rows being bags and columns being colors, where each entry is the number of M&M’s in that bag of that color. For example, the first entry in the first column might be 2, the number of Blue M&M’s in the first bag.

- Use

nrowsanddplyr::distinctto determine whether each row in the data frame that you created is distinct. - Put inside

replicateand estimate the probability that all bags will be distinct. This could take some time to run, depending on how you did parts b and c, so start with 500 replicates.

Exercises 6.26 - 6.32 require material through Section 6.5.

The data set words is built in to the stringr package.

- How many words in this data set contain “ff”

- What percentage of these words start with “s”?

The data set sentences is built in to the stringr package.

- What percentage of sentences contain the string “the”?

- What percentage of sentences contain the word “the” (so, either “the” or “The”)?

- What percentage of sentences start with the word “The”?

- Find the one sentence that has both an “x” and a “q” in it.

- Which words are the most common words that a sentence ends with?

The data set fruit is built in to the stringr package.

- How many fruits have the word “berry” in their name?

- Some of these fruit have the word “fruit” in their name. Find these fruit and remove the word “fruit” to create a list of words that can be made into fruit. (Hint: use

str_remove)

Exercises 6.29 - 6.31 require the babynames data frame from the babynames package.

Say that a name is popular if it was given to 1000 or more babies of a single sex. How many popular female names were there in 2015? What percentage of these popular female names ended in the letter ‘a’?

Consider the babynames data set. Restrict to babies born in 2003. We’ll consider a name to be gender neutral if the number of male babies given that name is within plus or minus 20 percent of the number of girl babies given that name. What were the 5 most popular gender neutral names in 2003?

The phonics package has a function metaphone that gives a rough phonetic transcription of English words.

Restrict to babies born in 2003, and create a new variable called phonetic, which gives the phonetic transcription of the names.

- Filter the data set so that the year is 2003 and so that each phonetic transcription has at least two distinct names associated with it.

- Filter the data so that it only contains the top 2 most given names for each phonetic transcription.

- Among the pairs of names for girls obtained in the previous part with the same metaphone representation and such that each name occurs less than 120 percent of the times that the other name occurs, which pair of names was given most frequently?

- We wouldn’t consider the most common pair to actually be the “same name.” Look through the list sorted by total number of times the pair of names occurs, and state which pair of names that you would consider to be the same name is the most common. That pair is, in some sense, the hardest to spell common name from 2003.

- What is the most common hard to spell triple of girls names from 2003? (Answers may vary, depending on how you interpret this. To get interesting answers, you may need to loosen the 120 percent rule from the previous part.)

In this exercise, we examine the expected number of tosses until various patterns occur.

For each pattern given below, find the expected number of tosses until the pattern occurs.

For example, if the pattern is HTH and you toss HHTTHTTHTH, then it would be 10 tosses until HTH occurs.

With the same sequence of tosses, it would be 5 tosses until TTH occurs.

(Hint: paste(coins, collapse = "") converts a vector containing H and T into a character string on which you can use the stringr commands such as str_locate.)

- What is the expected number of tosses until HTH occurs?

- Until HHT occurs?

- Until TTTT occurs?

- Until THHH occurs?

Exercises 6.33 - 6.35 require material through Section 6.6.

This exercise uses the billboard data from the tidyr package.

- Which artist had the most tracks on the charts in 2000?

- Which track from 2000 spent the most weeks at #1? (This requires tidying the data as described in section 6.6.1)

Consider the scrabble and letter_frequency data sets in the fosdata package.

- Create a new data frame that contains the variables

letter,points,number, andfrequencyfor all english letters a through z.numbershould be the number of tiles in a Scrabble set with that letter, andfrequencyshould be the letter frequency fromletter_frequency - Which letter has the largest absolute difference between the percentage of tiles that are that letter and the frequency of that letter occurring in

letter_frequency? Note that the frequency of tiles in Scrabble should be related to the frequency of letters in the dictionary. The data setletter_frequencygives the frequency in texts, so we would not expect the frequencies to match.

Consider the scotland_births data set in the fosdata package.

This data gives the number of births by the age of the mother in Scotland for each year from 1945-2019. This data is in wide format. (Completion of this exercise will be helpful for Exercise 7.26.)

- Convert the data into long format with three variable names:

age,yearandnumber, where each observation is thenumberof babies born inyearto mothers that areageyears old. - Convert the year to integer by removing the

xand usingas.integer. - Which year had the most babies born to mothers 20 years old or younger?

Exercise 6.36 requires material through Section 6.7.

Suppose you sample five numbers from a uniform distribution on the interval \([0, 1]\). Use simulation to show that the expected value of the \(k\)th smallest of the five values is \(\frac{k}{6}\). That is, the minimum of the 5 values has expected value 1/6, the second smallest of the values has expected value 2/6, and so on.

Loading

dplyrproduces warning messages because thedplyrpackage contains commands that override some base R commands (notably thefiltercommand). This book will not show these warnings. UsesuppressMessages(library(dplyr))if you want to load the package quietly.↩︎F. Maxwell Harper and Joseph A. Konstan. 2015. The MovieLens Datasets: History and Context. ACM Transactions on Interactive Intelligent Systems (TiiS) 5, 4: 19:1–19:19. https://doi.org/10.1145/2827872↩︎

Rating should almost certainly have been reclassified as an ordered factor, and it is not clear that taking the mean is really a valid thing to do.↩︎

Berg-Weger, Marla (2016). Social Work and Social Welfare: An Invitation. Routledge. p. 229. ISBN 978-1317592020.↩︎

Meerwijk EL, Sevelius JM. Transgender Population Size in the United States: a Meta-Regression of Population-Based Probability Samples. Am J Public Health. 2017;107(2):e1-e8. doi:10.2105/AJPH.2016.303578↩︎

Chokshi, N., “One in Every 137 Teenagers Would Identify as Transgender, Report Says”. New York Times, Feb. 23, 2017.↩︎

The GenIUSS Group. (2014). Best practices for asking questions to identify transgender and other gender minority respondents on population-based surveys. J.L. Herman (ed.). Los Angeles, CA: the Williams Institute.↩︎

Sell R, Goldberg S, Conron K (2015) The Utility of an Online Convenience Panel for Reaching Rare and Dispersed Populations. PLOS ONE 10(12): e0144011. https://doi.org/10.1371/journal.pone.0144011↩︎